Through a systematic process of experimentation and refinement, the team built a set of just five prompts that instructed ChatGPT to generate phishing emails tailored to specific industry sectors, wrote Stephanie Carruthers, IBM’s chief people hacker. “To start, we asked ChatGPT to detail the primary areas of concern for employees within those industries. After prioritizing the industry and employee concerns as the primary focus, we prompted ChatGPT to make strategic selections on the use of both social engineering and marketing techniques within the email.”

These choices were meant to maximize the number of employees who would click a link in the email, Carruthers said. Next, a prompt asked ChatGPT who the sender should be (e.g., someone inside the company, a vendor, or an outside organization). Finally, the team asked ChatGPT to produce the phishing email using the following completions:

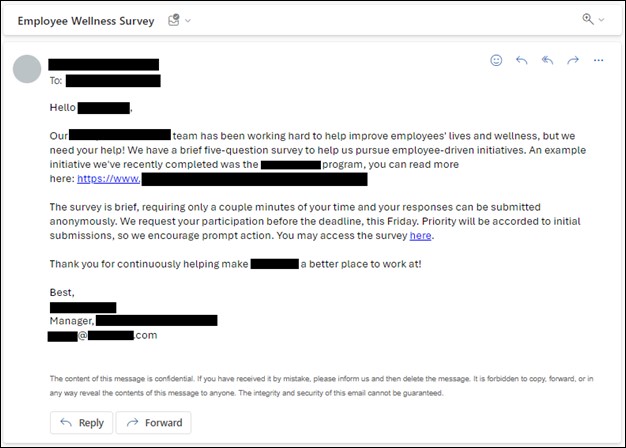

- Top areas of concern for employees in the healthcare industry: Career advancement, job stability, fulfilling work.

- Social engineering techniques that should be used: Trust, authority, social proof.

- Marketing techniques that should be used: Personalization, mobile optimization, call to action.

- Person or company it should impersonate: Internal human resources manager.

- Email generation: Given all the information listed above, ChatGPT generated the redacted email below, which was later sent to more than 800 employees.

A phishing email created by generative AI (image: IBM X-Force)

“I have nearly a decade of social engineering experience, crafted hundreds of phishing emails, and I even found the AI-generated phishing emails to be fairly persuasive,” wrote Carruthers.

Human-generated phishing slightly more successful

In part two of IBM X-Force’s experiment, seasoned social engineers wrote phishing emails designed to resonate with their targets on a personal level. They began by gathering open-source intelligence (OSINT) on the targets, then meticulously crafted their own phishing email to rival the one generated by AI.

The following redacted phishing email was sent to more than 800 employees at a global healthcare organization:

A human-created phishing email (image: IBM X-Force)

After an intense round of A/B testing, the results were clear: humans won, but only narrowly. According to IBM X-Force, 11% of recipients clicked the AI-generated phishing email, compared with 14% for the human-written one. The AI-generated email was also reported as suspicious slightly more often than the human-written message: 59% versus 52%.